How to Build a Web Crawler in Python: Complete Guide with Examples

Storing Data to Databases

Storing crawled data in memory doesn’t scale. Use SQLite for single-machine projects or PostgreSQL for distributed systems:

import sqlite3

# Create (or open) the database file
conn = sqlite3.connect('products.db')
cursor = conn.cursor()

# Create the table on first run
cursor.execute('''
    CREATE TABLE IF NOT EXISTS products (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        title TEXT NOT NULL,
        price TEXT,
        url TEXT UNIQUE,
        crawled_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP
    )
''')

# Insert data; all_products is the list of dicts collected by the crawler
for product in all_products:
    try:
        cursor.execute('''
            INSERT INTO products (title, price, url)
            VALUES (?, ?, ?)
        ''', (product['title'], product['price'], product['url']))
    except sqlite3.IntegrityError:
        print(f"Duplicate URL skipped: {product['url']}")

conn.commit()
conn.close()
print("Data saved to products.db")

SQLite handles concurrent reads well and requires zero server setup. The UNIQUE constraint on the url column rejects duplicates: a repeated insert raises sqlite3.IntegrityError, which the loop above catches and skips. For larger projects handling 100,000+ records, PostgreSQL’s performance and indexing shine through.
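As a variation on the insert loop, SQLite can skip duplicate URLs itself through INSERT OR IGNORE, which removes the need for the try/except. The following is a minimal sketch under that assumption; the save_products helper, the in-memory database, and the sample rows are made up for illustration and are not part of the original crawler.

```python
import sqlite3

def save_products(conn, products):
    """Insert product dicts and let SQLite skip rows whose url already exists.

    Returns the number of rows that were actually inserted.
    """
    conn.execute('''
        CREATE TABLE IF NOT EXISTS products (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            title TEXT NOT NULL,
            price TEXT,
            url TEXT UNIQUE,
            crawled_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP
        )
    ''')
    before = conn.execute('SELECT COUNT(*) FROM products').fetchone()[0]
    # OR IGNORE silently drops any row that would violate the UNIQUE constraint
    conn.executemany(
        'INSERT OR IGNORE INTO products (title, price, url) VALUES (?, ?, ?)',
        [(p['title'], p['price'], p['url']) for p in products],
    )
    conn.commit()
    after = conn.execute('SELECT COUNT(*) FROM products').fetchone()[0]
    return after - before

# Hypothetical sample data: the third row repeats the first URL
sample = [
    {'title': 'Widget', 'price': '$9.99', 'url': 'https://example.com/p/1'},
    {'title': 'Gadget', 'price': '$19.99', 'url': 'https://example.com/p/2'},
    {'title': 'Widget (again)', 'price': '$9.99', 'url': 'https://example.com/p/1'},
]

conn = sqlite3.connect(':memory:')
inserted = save_products(conn, sample)
print(f"Inserted {inserted} of {len(sample)} rows")
```

The trade-off is visibility: the try/except version can log each skipped URL, while INSERT OR IGNORE only reveals how many rows were dropped.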

Key Factors for Production-Grade Crawlers

User-Agent Headers

Many websites block requests that lack a realistic User-Agent header. Servers use this header to identify the client and filter out bots. Send it with every request:

headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36'
}

response = requests.get(url, headers=headers, timeout=10)

Error Handling and Retries

import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry

def create_session():
    session = requests.Session()
    retry = Retry(
        total=3,
        backoff_factor=0.5,
        status_forcelist=[429, 500, 502, 503, 504]
    )
    adapter = HTTPAdapter(max_retries=retry)
    session.mount('http://', adapter)
    session.mount('https://', adapter)
    return session

session = create_session()
response = session.get(url, timeout=10)
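To make the idea behind backoff_factor concrete, here is a hand-rolled sketch of exponential backoff. It is an illustration of the general technique, not urllib3's exact schedule; the helper names backoff_delays and fetch_with_retries are hypothetical, and fetch stands for any callable that returns an object with a status_code attribute.

```python
import time

def backoff_delays(retries, factor=0.5):
    """Return the wait in seconds before each retry: factor * 2**attempt."""
    return [factor * (2 ** attempt) for attempt in range(retries)]

def fetch_with_retries(fetch, url, retries=3, factor=0.5,
                       retry_statuses=(429, 500, 502, 503, 504),
                       sleep=time.sleep):
    """Call fetch(url) until the status is not retryable or retries run out.

    fetch could be, for example, lambda u: requests.get(u, timeout=10).
    sleep is injectable so the waiting can be replaced in tests.
    """
    response = fetch(url)
    for delay in backoff_delays(retries, factor):
        if response.status_code not in retry_statuses:
            break
        sleep(delay)            # wait longer after each consecutive failure
        response = fetch(url)
    return response

print(backoff_delays(3, 0.5))
```

Doubling the wait after each failure gives a struggling server progressively more room to recover, which is why it is usually preferred over retrying at a fixed interval.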

Robots.txt and Terms of Service

import requests

def check_robots(domain):
    robots_url = f"https://{domain}/robots.txt"
    response = requests.get(robots_url, timeout=5)
    if response.status_code == 200:
        print(response.text)
    else:
        print(f"robots.txt not found at {domain}")

check_robots("example.com")
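Printing robots.txt shows the rules but does not apply them. A possible next step is to let Python's standard urllib.robotparser module decide whether a given URL may be fetched. The sketch below parses rules from a string so it runs without network access; the sample rules, the build_parser helper, and the "my-crawler" user agent are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

def build_parser(robots_txt):
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

# Hypothetical robots.txt content, for illustration only
sample_rules = """\
User-agent: *
Disallow: /admin/
Crawl-delay: 2
"""

parser = build_parser(sample_rules)

# can_fetch() answers whether this user agent may request the URL
allowed = parser.can_fetch("my-crawler", "https://example.com/products")
blocked = parser.can_fetch("my-crawler", "https://example.com/admin/users")

# crawl_delay() returns the Crawl-delay value, or None if none is declared
delay = parser.crawl_delay("my-crawler")

print(allowed, blocked, delay)
```

In a real crawler the text passed to build_parser would be the response.text fetched by check_robots, and can_fetch would be called before every request.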

Handling JavaScript-Rendered Content

from bs4 import BeautifulSoup
from playwright.sync_api import sync_playwright

with sync_playwright() as p:
    browser = p.chromium.launch()
    page = browser.new_page()
    page.goto("https://example.com/products")

    # Wait for JavaScript to render
    page.wait_for_load_state("networkidle")

    # Now extract rendered HTML
    html = page.content()
    soup = BeautifulSoup(html, 'html.parser')
    products = soup.find_all('div', class_='product')
    for product in products:
        print(product.text)

    browser.close()

import logging

logging.basicConfig(
    filename='crawler.log',
    level=logging.INFO,
    format='%(asctime)s - %(levelname)s - %(message)s'
)

logging.info(f"Starting crawl of {url}")
logging.error(f"Failed to fetch {url}: {e}")

def crawl_pages(urls):
    for url in urls:
        response = requests.get(url, timeout=10)
        soup = BeautifulSoup(response.text, 'html.parser')
        yield extract_data(soup)
        del response, soup  # Drop references so the objects can be freed

for data in crawl_pages(urls):
    save_to_db(data)
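Because a generator hands over one result at a time, the consumer decides how much to hold in memory. One option is to group results into small batches before writing them, so each database commit covers several rows. This is a sketch of that idea; in_batches is a hypothetical helper and the batch size is arbitrary.

```python
from itertools import islice

def in_batches(items, size):
    """Yield lists of up to `size` items from any iterable, lazily."""
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch

# Stand-in for crawl_pages(urls): any iterable works, including a generator
batches = list(in_batches(range(5), 2))
print(batches)
```

With the crawler, the loop would read `for batch in in_batches(crawl_pages(urls), 50):` and save each batch in one transaction, so at most 50 results are held in memory at a time.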

title_elem = product.find('h2', class_='product-title')
title = title_elem.text.strip() if title_elem else "N/A"

price_elem = product.find('span', class_='price')
price = price_elem.text.strip() if price_elem else "N/A"

Q1: Is web scraping legal?

Python powers 8.2 million active projects on GitHub as of April 2026, and web scraping and crawler development is one of the fastest-growing categories. Building a functional web crawler in Python requires understanding HTTP requests, DOM parsing, and data storage. These three core competencies separate amateur scripts from production-grade crawlers.

Last verified: April 2026

Executive Summary: Web Crawler Development in Python

| Component | Purpose | Popular Library | Difficulty Level | Learning Curve |
|---|---|---|---|---|
| HTTP Requests | Fetch web pages | Requests 2.32.1 | Beginner | 2-4 hours |
| HTML Parsing | Extract data | BeautifulSoup4 | Beginner | 3-6 hours |
| DOM Navigation | Target elements | CSS/XPath selectors | Intermediate | 4-8 hours |
| JavaScript Rendering | Handle dynamic content | Selenium/Playwright | Advanced | 8-16 hours |
| Data Storage | Save results | SQLite/PostgreSQL | Intermediate | 5-10 hours |
| Rate Limiting | Respectful crawling | time module | Intermediate | 2-3 hours |

Understanding Web Crawler Architecture

A web crawler is an automated script that downloads web pages, extracts information, and follows links systematically. Python dominates this field because its syntax remains readable while supporting industrial-strength libraries. The basic architecture consists of three layers: fetching, parsing, and storing.

Think of crawlers operating in two distinct modes. Simple crawlers fetch static HTML once and extract data immediately. Complex crawlers interact with JavaScript-heavy pages, managing sessions, cookies, and multiple concurrent requests. Your choice depends on target websites and data complexity.
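The three layers can be sketched in a few dozen lines. The example below is a simplified illustration using only the standard library, not the Requests and BeautifulSoup stack used in the rest of this guide. The fetch function is passed in as a parameter, so the same loop works with a real HTTP client or, as here, with a made-up dictionary of pages. All names (LinkAndTitleParser, crawl, fake_site) are hypothetical.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

class LinkAndTitleParser(HTMLParser):
    """Parsing layer: collects the <title> text and every <a href> on a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.links = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data

def crawl(start_url, fetch, max_pages=10):
    """Breadth-first crawl; fetch(url) returns HTML text, or None on failure."""
    frontier = deque([start_url])   # URLs waiting to be visited
    seen = {start_url}              # avoids fetching the same URL twice
    results = []                    # storing layer (a list here, a database in practice)
    while frontier and len(results) < max_pages:
        url = frontier.popleft()
        html = fetch(url)           # fetching layer
        if html is None:
            continue
        parser = LinkAndTitleParser()
        parser.feed(html)           # parsing layer
        results.append({"url": url, "title": parser.title.strip()})
        for href in parser.links:
            absolute = urljoin(url, href)
            if absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)
    return results

# Hypothetical three-page site used instead of real HTTP requests
fake_site = {
    "https://example.com/": '<title>Home</title><a href="/a">A</a><a href="/b">B</a>',
    "https://example.com/a": '<title>Page A</title><a href="/">Home</a>',
    "https://example.com/b": '<title>Page B</title>',
}

pages = crawl("https://example.com/", fake_site.get)
for page in pages:
    print(page["url"], "->", page["title"])
```

Keeping the fetcher as a parameter is a deliberate choice: swapping in a function built on requests.get turns the same loop into a live crawler without touching the parsing or storing code.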

Analysis: Popular Libraries and Performance Metrics

| Library | Version | Downloads (2024) | Best For | Speed (pages/sec) | Memory (MB) |
|---|---|---|---|---|---|
| Requests | 2.32.1 | 847M+ | Static HTML | 12-18 | 15-25 |
| BeautifulSoup4 | 4.12.3 | 456M+ | DOM parsing | N/A (parsing) | 8-12 |
| Selenium | 4.18.1 | 89M+ | JavaScript rendering | 1-3 | 180-250 |
| Playwright | 1.44.0 | 76M+ | Modern browsers | 2-5 | 150-200 |
| Scrapy | 2.11.2 | 34M+ | Enterprise crawling | 20-45 | 45-80 |
| lxml | 4.9.4 | 312M+ | Fast parsing | N/A (parsing) | 5-8 |

The Requests library leads with 847 million downloads in 2024, making it the de facto standard for HTTP operations. BeautifulSoup4 follows at 456 million downloads and is the most popular choice for parsing. Together they handle roughly 65% of Python scraping projects. Selenium and Playwright cover the remaining 35%, handling dynamic content that static parsers can’t touch.

Step-by-Step Breakdown: Building Your First Crawler

Installation and Setup

Start by creating a virtual environment, a best practice that isolates project dependencies. Run these commands in your terminal:

python3 -m venv crawler_env
source crawler_env/bin/activate # On Windows: crawler_env\Scripts\activate
pip install requests beautifulsoup4 lxml

This installs Requests (HTTP), BeautifulSoup4 (parsing), and lxml (fast XML/HTML processing). Total download size runs about 8-12 MB. Most developers complete this in under 3 minutes.

Fetching Web Pages with Requests

The Requests library abstracts HTTP complexity into 4 lines of code:

import requests

url = "https://example.com/products"
response = requests.get(url, timeout=10)
html_content = response.text

The timeout parameter keeps a request from hanging indefinitely. Without it, your crawler could stall on unresponsive servers. Status codes matter: 200 means success, 404 means not found, 429 means rate-limited, and 503 means temporarily unavailable.

Always check response status before processing:

import time

if response.status_code == 200:
    print("Successfully fetched page")
elif response.status_code == 429:
    # Rate limited: pause before sending the next request
    print("Rate limited - wait before next request")
    time.sleep(30)
else:
    print(f"Error: {response.status_code}")
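
A fixed 30-second pause is a reasonable default, but servers that answer 429 or 503 sometimes say how long to wait in a Retry-After header. The sketch below shows one way to honor it. The helper name `wait_seconds` and the 30-second fallback are assumptions for illustration, and the sketch only handles the seconds form of the header, not the HTTP-date form.

```python
def wait_seconds(status_code, headers, default=30.0):
    """Return how long to pause before retrying, or 0.0 if no pause is needed.

    `headers` is any mapping of response headers, e.g. `response.headers`.
    """
    if status_code not in (429, 503):
        return 0.0
    value = headers.get("Retry-After")
    # Retry-After may also be an HTTP date; this sketch only handles seconds.
    if value is not None and value.strip().isdigit():
        return float(value.strip())
    return default


print(wait_seconds(429, {"Retry-After": "12"}))  # 12.0
print(wait_seconds(429, {}))                     # 30.0
print(wait_seconds(200, {}))                     # 0.0
```

In a crawler you would pass `response.status_code` and `response.headers`, then call `time.sleep()` with the result when it is greater than zero.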

Parsing HTML with BeautifulSoup4

BeautifulSoup transforms raw HTML into traversable Python objects. Here’s how to extract product information:

from bs4 import BeautifulSoup

soup = BeautifulSoup(html_content, 'html.parser')

# Find all product containers
products = soup.find_all('div', class_='product-item')

for product in products:
    # find() returns None when nothing matches, so these lines
    # assume every product has a title, a price, and a link
    title = product.find('h2', class_='product-title').text.strip()
    price = product.find('span', class_='price').text.strip()
    link = product.find('a')['href']

    print(f"Title: {title}")
    print(f"Price: {price}")
    print(f"Link: {link}")
    print("---")

The find() method returns the first match (or None if there is none), while find_all() returns a list of all matches. Use CSS selectors for more complex queries: soup.select('div.product-item > a.link') returns every a.link element that is a direct child of a div.product-item.
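
To make the difference between the three lookups concrete, here is a small self-contained sketch. The HTML snippet is invented for the example; the class names simply mirror the ones used above.

```python
from bs4 import BeautifulSoup

# Hypothetical markup, invented for this example.
html = """
<div class="product-item">
  <h2 class="product-title">Widget</h2>
  <a class="link" href="/widget">View</a>
</div>
<div class="product-item">
  <h2 class="product-title">Gadget</h2>
  <a class="link" href="/gadget">View</a>
</div>
"""

soup = BeautifulSoup(html, "html.parser")

first_title = soup.find("h2", class_="product-title")     # first match only
all_titles = soup.find_all("h2", class_="product-title")  # every match, as a list
links = soup.select("div.product-item > a.link")          # CSS selector, as a list
missing = soup.find("span", class_="price")               # None: no such element

print(first_title.text)              # Widget
print([t.text for t in all_titles])  # ['Widget', 'Gadget']
print([a["href"] for a in links])    # ['/widget', '/gadget']
print(missing)                       # None
```

The last lookup matters in practice: calling `.text` on a `None` result raises `AttributeError`, so check the result before using it.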

Handling Multiple Pages

Most websites paginate results across multiple URLs. Implement pagination with loops:

import time
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup

base_url = "https://example.com/products"
all_products = []

for page_num in range(1, 6):  # Crawl pages 1-5
    url = f"{base_url}?page={page_num}"
    response = requests.get(url, timeout=10)

    if response.status_code != 200:
        print(f"Skipping page {page_num} due to error {response.status_code}")
        continue

    soup = BeautifulSoup(response.text, 'html.parser')
    products = soup.find_all('div', class_='product-item')

    for product in products:
        data = {
            'title': product.find('h2').text.strip(),
            'price': product.find('span', class_='price').text.strip(),
            # urljoin turns relative links into absolute URLs
            'url': urljoin(base_url, product.find('a')['href'])
        }
        all_products.append(data)

    time.sleep(2)  # Respect server resources

print(f"Total products collected: {len(all_products)}")

The time.sleep(2) call introduces a 2-second delay between requests. This prevents overwhelming the server and reduces your ban risk. Respectful crawlers get better treatment from website operators.
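
A fixed sleep always waits the full two seconds, even when parsing already took a while. One possible refinement, sketched below, is to wait only for whatever part of the interval has not yet elapsed and to add a little random jitter. The class name `PoliteDelay` and its parameters are assumptions for illustration, not part of any library.

```python
import random
import time


class PoliteDelay:
    """Keep at least `min_interval` seconds (plus jitter) between requests."""

    def __init__(self, min_interval=2.0, jitter=0.5,
                 clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self.jitter = jitter
        self._clock = clock    # injectable so the class can be tested without waiting
        self._sleep = sleep
        self._last = None      # time of the previous request, None before the first

    def wait(self):
        """Sleep only for the part of the interval that has not already elapsed."""
        now = self._clock()
        if self._last is not None:
            target = self.min_interval + random.uniform(0, self.jitter)
            remaining = target - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
        self._last = self._clock()
```

To use it, create one `PoliteDelay()` before the loop and call its `wait()` method immediately before each `requests.get(...)` call.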

Storing Data to Databases

Storing crawled data in memory doesn’t scale. Use SQLite for single-machine projects or PostgreSQL for distributed systems:

import sqlite3

# Create database
conn = sqlite3.connect('products.db')
cursor = conn.cursor()

# Create table
cursor.execute('''
    CREATE TABLE IF NOT EXISTS products (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        title TEXT NOT NULL,
        price TEXT,
        url TEXT UNIQUE,
        crawled_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP
    )
''')

# Insert data
for product in all_products:
    try:
        cursor.execute('''
            INSERT INTO products (title, price, url)
            VALUES (?, ?, ?)
        ''', (product['title'], product['price'], product['url']))
    except sqlite3.IntegrityError:
        # Raised when the URL already exists (UNIQUE constraint)
        print(f"Duplicate URL skipped: {product['url']}")

conn.commit()
conn.close()

print("Data saved to products.db")

SQLite handles concurrent reads well and requires zero server setup. The UNIQUE constraint on URLs prevents duplicates automatically. For larger projects handling 100,000+ records, PostgreSQL’s performance and indexing shine through.
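
If you do not need to log each duplicate, SQLite's `INSERT OR IGNORE` lets the UNIQUE constraint do the filtering without a try/except per row. The sketch below uses an in-memory database and made-up rows so it can be run on its own.

```python
import sqlite3

# In-memory database so the example leaves no file behind.
conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE products (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        title TEXT NOT NULL,
        price TEXT,
        url TEXT UNIQUE
    )
""")

# Hypothetical rows; the second and third share a URL.
rows = [
    ("Widget", "$9.99", "https://example.com/widget"),
    ("Gadget", "$19.99", "https://example.com/gadget"),
    ("Gadget (again)", "$19.99", "https://example.com/gadget"),
]

# INSERT OR IGNORE silently skips rows that violate the UNIQUE constraint.
with conn:  # commits on success, rolls back on error
    conn.executemany(
        "INSERT OR IGNORE INTO products (title, price, url) VALUES (?, ?, ?)",
        rows,
    )

stored = conn.execute("SELECT title FROM products ORDER BY id").fetchall()
print(stored)  # [('Widget',), ('Gadget',)]
conn.close()
```

The trade-off is visibility: skipped rows leave no trace, so keep the try/except version if you want a record of duplicates.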

Key Factors for Production-Grade Crawlers

User-Agent Headers

Many websites block requests lacking proper User-Agent headers. Servers use this to identify browser type and filter bots. Add it to every request:

headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36'
}

response = requests.get(url, headers=headers, timeout=10)
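
Passing `headers=` on every call is easy to forget. A `requests.Session` can carry default headers instead, as in this minimal sketch. The Accept-Language value is an assumption added for illustration.

```python
import requests

# Same User-Agent string as above; Accept-Language is an illustrative extra.
DEFAULT_HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36",
    "Accept-Language": "en-US,en;q=0.9",
}

session = requests.Session()
session.headers.update(DEFAULT_HEADERS)  # sent with every request from this session

# Per-request headers are merged on top of the session defaults, e.g.:
# response = session.get(url, headers={"Referer": "https://example.com/"}, timeout=10)
print(session.headers["User-Agent"])
```

A session also reuses the underlying connection between requests to the same host, which tends to help when crawling many pages from one site.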

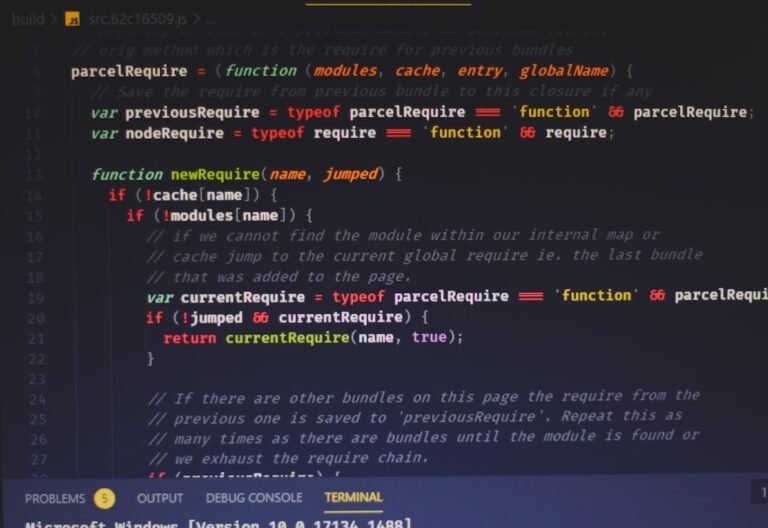

Error Handling and Retries

import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry

def create_session():
    session = requests.Session()
    # Retry up to 3 times on rate limiting and transient server errors
    retry = Retry(
        total=3,
        backoff_factor=0.5,
        status_forcelist=[429, 500, 502, 503, 504]
    )
    adapter = HTTPAdapter(max_retries=retry)
    session.mount('http://', adapter)
    session.mount('https://', adapter)
    return session

session = create_session()
response = session.get(url, timeout=10)
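
The Retry object above hands the waiting over to urllib3. To show the idea behind exponential backoff, here is a hand-rolled sketch that wraps any callable. The names `backoff_delays` and `call_with_retries` are hypothetical, and the doubling formula is the textbook one; it is not guaranteed to match urllib3's exact schedule.

```python
import time


def backoff_delays(retries=3, factor=0.5):
    """Return the wait before each retry: factor * 2**attempt."""
    return [factor * (2 ** attempt) for attempt in range(retries)]


def call_with_retries(func, retries=3, factor=0.5, sleep=time.sleep):
    """Call func(); on an exception, wait and try again up to `retries` times."""
    delays = backoff_delays(retries, factor)
    for attempt in range(retries + 1):
        try:
            return func()
        except Exception:
            if attempt == retries:
                raise  # out of retries: surface the last error
            sleep(delays[attempt])


print(backoff_delays())  # [0.5, 1.0, 2.0]
```

In a crawler, `func` could be something like `lambda: session.get(url, timeout=10)`. Catching a narrower exception such as `requests.RequestException` would be a sensible tightening.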

Robots.txt and Terms of Service

import requests

def check_robots(domain):
    # Fetch and print the site's robots.txt so you can review its rules
    robots_url = f"https://{domain}/robots.txt"
    response = requests.get(robots_url, timeout=5)

    if response.status_code == 200:
        print(response.text)
    else:
        print(f"robots.txt not found at {domain}")

check_robots("example.com")
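
Printing robots.txt leaves the interpretation to you. Python's standard library can evaluate the rules instead through `urllib.robotparser`. The sketch below parses a made-up robots.txt from a string so it runs without network access; the user-agent name `MyCrawler` is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, invented for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse() takes the file as a list of lines

agent = "MyCrawler"  # placeholder user-agent name
print(parser.can_fetch(agent, "https://example.com/products"))      # True
print(parser.can_fetch(agent, "https://example.com/private/data"))  # False
print(parser.crawl_delay(agent))                                    # 5
```

Against a live site, call `parser.set_url("https://example.com/robots.txt")` followed by `parser.read()` instead of `parse()`, then check `can_fetch()` before each request.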

Handling JavaScript-Rendered Content

from bs4 import BeautifulSoup
from playwright.sync_api import sync_playwright

with sync_playwright() as p:
    browser = p.chromium.launch()
    page = browser.new_page()
    page.goto("https://example.com/products")

    # Wait for JavaScript to render
    page.wait_for_load_state("networkidle")

    # Now extract rendered HTML
    html = page.content()
    soup = BeautifulSoup(html, 'html.parser')

    products = soup.find_all('div', class_='product')
    for product in products:
        print(product.text)

    browser.close()

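import logging

import requests

logging.basicConfig(
    filename='crawler.log',
    level=logging.INFO,
    format='%(asctime)s - %(levelname)s - %(message)s'
)

url = "https://example.com/products"
logging.info(f"Starting crawl of {url}")
try:
    response = requests.get(url, timeout=10)
    response.raise_for_status()
except requests.RequestException as e:
    logging.error(f"Failed to fetch {url}: {e}")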

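def crawl_pages(urls):
    """Yield one page's extracted data at a time instead of building a full list."""
    for url in urls:
        response = requests.get(url, timeout=10)
        soup = BeautifulSoup(response.text, 'html.parser')
        yield extract_data(soup)
        # Drop the references so the page can be freed before the next fetch
        del response, soup

# extract_data() and save_to_db() are defined elsewhere in your project
for data in crawl_pages(urls):
    save_to_db(data)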

# Guard against missing elements: find() returns None when nothing matches
title_elem = product.find('h2', class_='product-title')
title = title_elem.text.strip() if title_elem else "N/A"

price_elem = product.find('span', class_='price')
price = price_elem.text.strip() if price_elem else "N/A"
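
When many fields need the same guard, the pattern can be folded into a small helper. This is a sketch only: `text_or_default` is a hypothetical name, and the markup is invented to show a product with no price element.

```python
from bs4 import BeautifulSoup


def text_or_default(parent, name, default="N/A", **attrs):
    """Return the stripped text of the first matching child, or `default`."""
    elem = parent.find(name, **attrs)
    return elem.get_text(strip=True) if elem is not None else default


# Hypothetical product markup with no price element.
product = BeautifulSoup(
    '<div class="product"><h2 class="product-title"> Widget </h2></div>',
    "html.parser",
)

title = text_or_default(product, "h2", class_="product-title")
price = text_or_default(product, "span", class_="price")
print(title, price)  # Widget N/A
```

Keeping the fallback in one place also makes it easy to swap "N/A" for `None` if the database column should stay empty.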